ok, thanks, I will try.

But I want real-time data. What should I put for the time? Now?

You will get data at least 5 seconds late I think. You can put from_utc just a few minutes back and end_utc as current time.
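A minimal sketch of that idea in Python: compute a window that ends now and starts a few minutes back. The helper name `recent_window` and the exact timestamp format are assumptions here; the only format seen in this thread is the ISO-like `2019-05-05T08:00` style.

```python
from datetime import datetime, timedelta, timezone


def recent_window(minutes_back=5, now=None):
    """Return (from_utc, end_utc) strings covering the last few minutes.

    `recent_window` is a hypothetical helper, and the timestamp format
    is an assumption based on the ISO-like values used in this thread.
    """
    end = now or datetime.now(timezone.utc)
    start = end - timedelta(minutes=minutes_back)
    fmt = "%Y-%m-%dT%H:%M:%S"
    return start.strftime(fmt), end.strftime(fmt)


# Example: a five-minute window ending at the current UTC time.
from_utc, end_utc = recent_window(5)
print(from_utc, end_utc)
```

Polling with a window like this every few seconds should pick up new points as they arrive, keeping in mind the delay mentioned above.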

I guess, I haven’t tried but should work?

Thanks, it is working well. I just put in my own token and my own ID.

I just changed the second time value to "now".

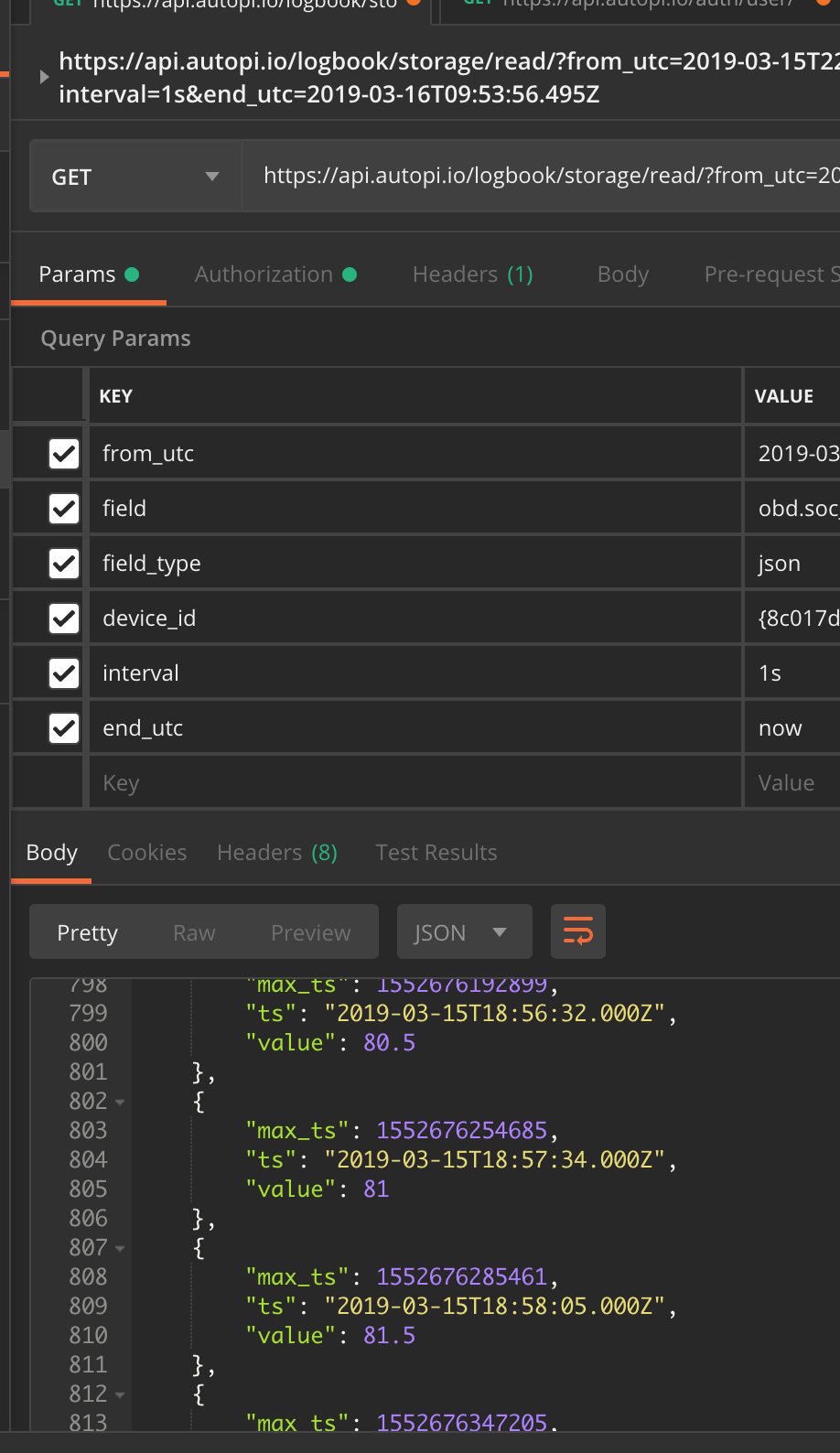

This is the screenshot.

Now I want to make the request from my website, from a PHP or JSON page, and then, once I have the data, send the latest value to MySQL (I have a web app for the Kona that I want to use). But I don't know how to write the same GET request as in Postman in my web page.

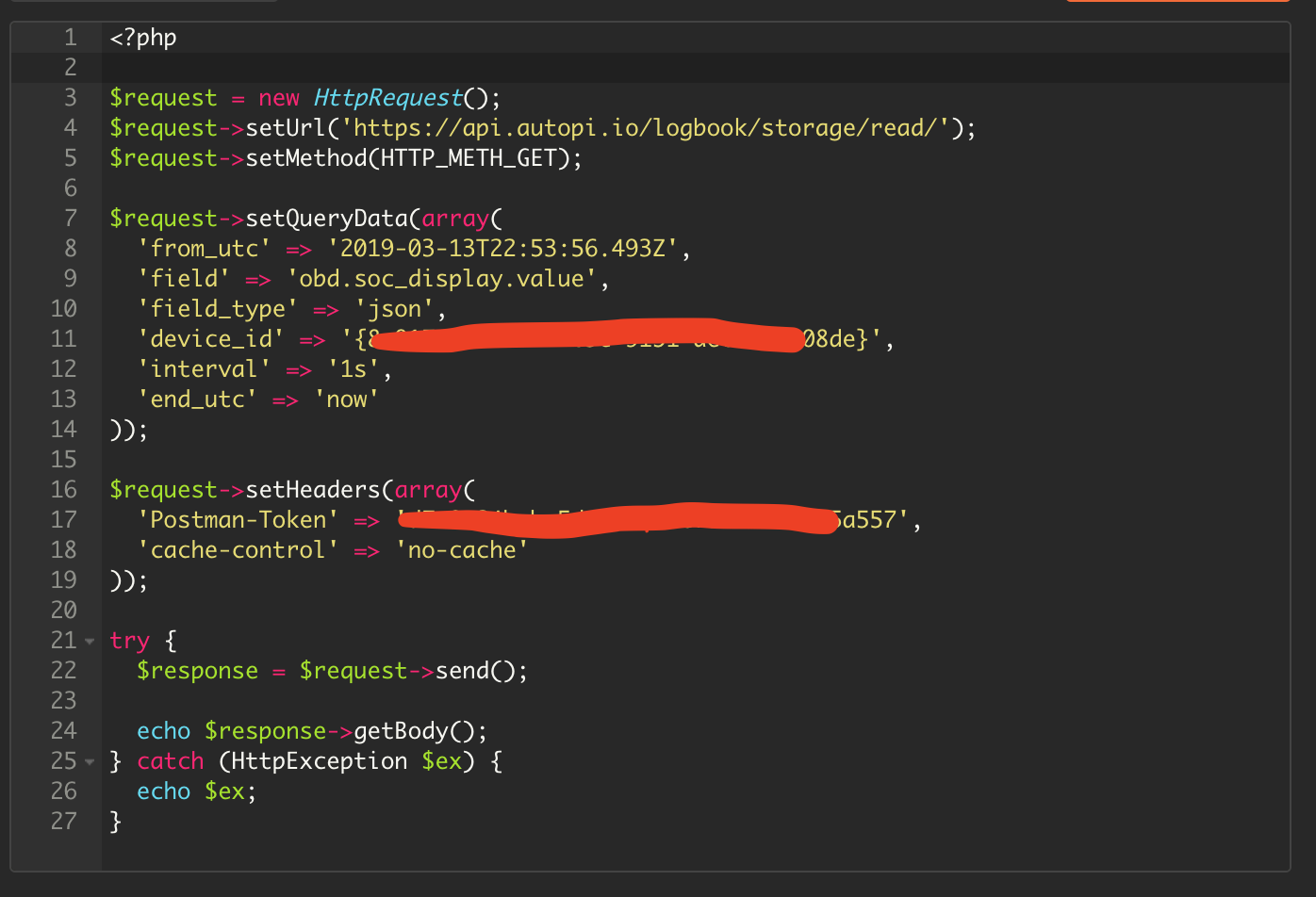

I tried to write this in PHP, but I still get an error.

@Remy_Tsuihiji I suspect your problem there is that you’re sending “Postman-Token”. The header you want is “Authorization”, where the value should be “bearer my-api-key”.
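As a rough illustration (in Python rather than PHP, standard library only), this is how the token header could be attached to a request. It uses the header name `Authorization`, as in the curl command further down this thread. The function name `build_request` is made up for the example, and nothing is sent over the network until `urlopen` is called.

```python
import urllib.request


def build_request(url, token):
    """Build a GET request carrying the API token.

    `build_request` is a hypothetical helper; the header layout
    ("Authorization: bearer <token>") follows the curl example in
    this thread.
    """
    return urllib.request.Request(
        url,
        headers={"Authorization": f"bearer {token}"},
    )


req = build_request("https://api.autopi.io/logbook/storage/read/", "my-api-key")
# To actually send it: urllib.request.urlopen(req)
```

The same header should carry over to a PHP page: whatever HTTP client is used there, it needs to send that one header with the token as its value.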

OK, it’s all wrong… Hmm, I don’t know how to do it. I should study.

So I’m starting to get some data out of the API, but some of the details aren’t all that clear. So some questions:

1. In /logbook/elasticsearch, what are the key and type fields?
2. In /logbook/raw, what is the data_type field? I’ve not been able to get any data from this operation so far.
3. In /logbook/storage/data, what are the key and type fields? type doesn’t seem to be the same type as returned from /logbook/storage/fields/.
4. Any reason to use both from_utc and start_utc field names?
5. Is /logbook/storage/read processed data?
6. How can I get multiple logger data for the same timestamps?

Thanks

Hi, I’m trying the login request but I’m getting a security error saying:

The page at ‘https://api.autopi.io/’ was loaded over HTTPS, but requested an insecure resource ‘http://backend01/auth/login/’. This request has been blocked; the content must be served over HTTPS.

Is it a problem on your side or am I doing something wrong here?

Hi, I cannot log into the API page using the guide in the first post, point 2. I just get an “undefined” when typing my email and password. However, I can log in using Postman and through the Node-RED code given in another thread here.

Can anyone give me a hint what I am doing wrong?

Hi @sysdev, @Andreas_Nygren and @Marianne_Diniz

There is an error in the interactive part of our current API documentation.

But we are days away from releasing a swagger based replacement.

I suggest that you use postman to make the requests instead.

If you find something that is not adequately described in the API documentation, please let us know. We are working on improving the documentation all over.


Best regards

/Malte

Hi @Malte,

I accessed the API via Postman, but I have the same question as @plord: how can I get multiple logger data for the same timestamps?

Also, if the AutoPi is not communicating, is the data stored locally and then sent to the cloud later?

Hi Peter

1. The elasticsearch and storage/data endpoints (questions 1 and 3) are the same; the elasticsearch endpoint was an alias that has since been removed.

   The type can be position, accelerometer or primitive.

   The key can be rpi-temperature, voltage, coolant_temp, engine_load, fuel_level, fuel_rate, intake_temp or speed.

   Those values correspond to a search query in our storage backend, but we have since started migrating to a more generic interface, where the search query is built by the frontend instead, so that you can retrieve all your custom data as well.

   We are in the process of cleaning up the API to make it much easier to understand. For example, there should not be two different read endpoints.

   Sorry about the confusion.

2. The data_type field is used to query our storage backend. All data inserted into our storage system has an assigned datatype, which is automatically set to the name of the module that returned the data.

   So if you have a module my_module with a function called x_value, and you execute that in a job with the returner set to cloud, the result will be inserted with a datatype of my_module.x_value.

3. See 1.

4. Absolutely not, the naming should be consistent. I will see what I can do about that.

5. The storage/read endpoint returns data from storage, the same as the storage/data endpoint, so I would say yes, but I’m not sure if that’s what you mean by processed data?

6. All data is logged with the exact timestamp, so the values will likely all have slightly different timestamps.

   We currently don’t have a single API call that can return multiple fields. I expect that the current read endpoint will have that functionality at some point, but due to the slightly different timestamps, this would likely be implemented using aggregations, where the time range is split into smaller groups and the values in each group are aggregated.

I hope that answers the question, if not, let me know.
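In the meantime, the grouping idea can be approximated on the client side. The sketch below is only an illustration, not how the backend works: it assumes each field has already been fetched separately as a list of (timestamp in milliseconds, value) pairs, and the function name `align_fields` and the choice of a mean per group are assumptions.

```python
from collections import defaultdict
from statistics import mean


def align_fields(series, bucket_ms=10_000):
    """Group several fields into shared time buckets.

    series: {field_name: [(ts_ms, value), ...]}
    Returns {bucket_start_ms: {field_name: mean_of_values_in_bucket}}.
    Hypothetical client-side helper, not part of the API.
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for field, points in series.items():
        for ts_ms, value in points:
            # Round the timestamp down to the start of its bucket.
            start = ts_ms - ts_ms % bucket_ms
            buckets[start][field].append(value)
    return {
        start: {field: mean(values) for field, values in fields.items()}
        for start, fields in sorted(buckets.items())
    }


example = {
    "speed": [(1000, 10.0), (4000, 20.0), (12000, 30.0)],
    "rpm": [(2500, 1000.0)],
}
print(align_fields(example))
```

A field with no samples in a given bucket is simply absent from that bucket, so callers need to handle missing keys.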

We really appreciate all questions and suggestions, and it all helps us prioritize and shape our backlog. I’m sorry about the late reply.

Best regards

/Malte

Hi Marianne

Yes, the device logs the data and continually uploads it to the cloud. If it loses its network connection for some reason, the data is still stored on the device and will be uploaded when it gets a connection later.

Best regards

/Malte

Many thanks for your reply.

So yesterday I drove to London and back. I have obd.driving_power logged every 10 seconds, but running logbook/storage/read on it I see data only every 1 or 2 hours:

    curl --silent --header "Content-Type: application/json" --header "Authorization: bearer ${token}" "https://api.autopi.io/logbook/storage/read/?device_id=${deviceid}&field_type=primitive&field=obd.driving_power.value&from_utc=2019-05-05T08:00" | jq '.'

    [
      {
        "max_ts": 1557050389055,
        "ts": "2019-05-05T09:00:00.000Z",
        "value": 6.765374940931797
      },
      {
        "max_ts": 1557052632629,
        "ts": "2019-05-05T10:00:00.000Z",
        "value": 2.8325791591778398
      },
      {
        "max_ts": 1557067992566,
        "ts": "2019-05-05T14:00:00.000Z",
        "value": 2.2072970522888777
      },
      {
        "max_ts": 1557074881978,
        "ts": "2019-05-05T16:00:00.000Z",
        "value": 4.9835646623914895
      },
      {
        "max_ts": 1557084348154,
        "ts": "2019-05-05T19:00:00.000Z",
        "value": 6.907920009381062
      }
    ]

So lots of data points missing, hence I was wondering if this has been processed or averaged.

I suppose if I ask my question a different way, how can I get all of the raw data ( such as obd.driving_power.value ).

Many thanks,

Pete

Hi Peter

The logbook/storage/read endpoint also takes an interval parameter. The default value is currently 1h, but it can be changed to something like 10s.

It’s also possible to retrieve the raw values by using the logbook/raw/ endpoint, which takes the following parameters:

- device_id

- data_type (obd.driving_power)

- start_utc and end_utc (will be renamed to from and to)
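As a sketch, the two request URLs could be put together like this in Python. The parameter names come from this thread; the helper names `storage_read_url` and `raw_url` are made up, and the parameters may be renamed later as noted above.

```python
from urllib.parse import urlencode

BASE = "https://api.autopi.io/logbook"


def storage_read_url(device_id, field, from_utc, interval="10s",
                     field_type="primitive"):
    """URL for logbook/storage/read/ with an explicit interval (hypothetical helper)."""
    query = urlencode({
        "device_id": device_id,
        "field_type": field_type,
        "field": field,
        "from_utc": from_utc,
        "interval": interval,
    })
    return f"{BASE}/storage/read/?{query}"


def raw_url(device_id, data_type, start_utc, end_utc):
    """URL for logbook/raw/ using the parameters listed above (hypothetical helper)."""
    query = urlencode({
        "device_id": device_id,
        "data_type": data_type,
        "start_utc": start_utc,
        "end_utc": end_utc,
    })
    return f"{BASE}/raw/?{query}"


print(storage_read_url("my-device-id", "obd.driving_power.value", "2019-05-05T08:00"))
print(raw_url("my-device-id", "obd.driving_power", "2019-05-05T08:00", "2019-05-05T20:00"))
```

Either URL still needs the Authorization header with the bearer token when it is requested.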

I hope this helps you.

Best regards

/Malte

Great, many thanks, both of these work

Hello!

I am trying to retrieve some data with no luck so far.

Reading the comments I understand that the API documentation portal has recently changed to a Swagger based API, so the first way explained by @Malte seems to be no longer applicable. How should it be done in the new portal?

I am also trying to do it using Python and the requests library:

    import requests

    headers = {'Content-Type': 'application/json',
               'email': '*****',
               'password': '*****'}
    api_url = 'https://api.autopi.io/auth/login/'
    response = requests.post(api_url, headers=headers)

    if response.status_code == 200:
        print(json.loads(response.content.decode('utf-8')))
    else:
        print(response.status_code)

I get error code 400 (“Bad request”). What am I doing wrong?

Thanks in advance.

Best,

Iker

EDIT: I found what my mistake was in the above Python code. Instead of headers, keyword data should be used: response = requests.post(api_url, data=headers). Still would appreciate feedback about how to work with the Swagger API
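For anyone hitting the same 400, the point is that the credentials belong in the request body, not in the headers. A small standard-library sketch that builds the request without sending it, so the body and headers can be inspected first. The helper name `build_login_request` is made up, and sending the body as JSON is an assumption.

```python
import json
import urllib.request


def build_login_request(email, password,
                        url="https://api.autopi.io/auth/login/"):
    """Build a POST login request with the credentials in the body.

    Hypothetical helper; assumes the endpoint accepts a JSON body.
    """
    body = json.dumps({"email": email, "password": password}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_login_request("*****", "*****")
print(req.get_method(), req.full_url)
print(req.data)  # the credentials travel here, not in the headers
# To actually send it: urllib.request.urlopen(req)
```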

Hi Malte,

I am trying to build a custom application that reviews driver behaviour using OBD data plus device sensor data. I am trying to get a score based on speed, trip data, RPM, acceleration, deceleration, etc. The challenge I have with the API at the moment is that I can get trip data, for example, but I am not sure how to retrieve the rest. Is there perhaps a raw file where everything under loggers is recorded, that I can access via the API? Is that what logbook/raw/ is supposed to do? I am getting nothing back from it at the moment. Also, how do I know which of the APIs are accessible and which are not? Is everything on https://api.autopi.io/ accessible?

Thanks.

Best Regards,

Moses

Hi

From your code, it looks like you mixed up the data and the headers.

It should instead look something like this

    import requests

    # Only request metadata belongs in the headers.
    headers = {'Content-Type': 'application/json'}
    # The credentials go in the request body.
    data = {'email': '*****', 'password': '*****'}
    api_url = 'https://api.autopi.io/auth/login/'

    # json= serializes the body as JSON, so it matches the Content-Type header.
    response = requests.post(api_url, headers=headers, json=data)

    if response.status_code == 200:
        print(response.json())
    else:
        print(response.status_code)

Hope this helps.